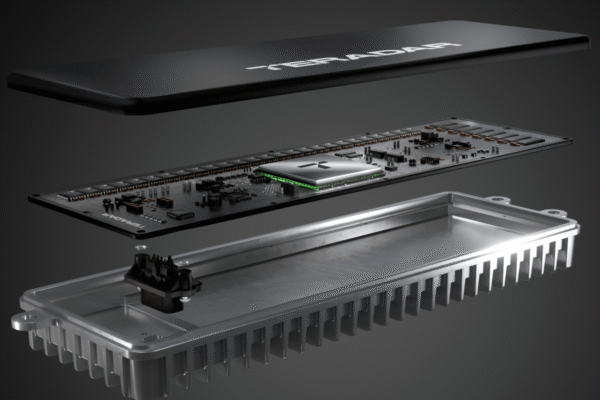

Teradar raises $150M for a sensor it says beats lidar and radar

Matt Carey, the co-founder and CEO of Boston-based startup Teradar, loves it when people tell him: “I don’t believe you.” That’s “right where we want folks,” he recently told TechCrunch. Carey has spent the last few years quietly building a solid-state sensor that sees the world using the terahertz band of the electromagnetic spectrum, which sits…